Agentic AI Systems

Senior Machine Learning Engineer

I build machine learning systems that solve real problems. My background spans academic research on video summarization at CERTH-ITI and deploying ML at scale in enterprise settings. I enjoy turning complex research into practical, production-ready solutions. When I'm not training models, you'll find me analyzing the latest Formula 1 race or hunting for the perfect freddo espresso. 🏎️☕

Thessaloniki, Greece

Apr 2026 – Present

Mar 2025 – Mar 2026

Building a multi-agent AI system that analyzes large volumes of customer feedback data, including millions of survey responses and chat transcripts, to identify trends and summarize customer experiences automatically.

Thessaloniki, Greece

Jun 2024 – Feb 2025

Led development of critical components for an enterprise-scale Business Continuity Platform at a major energy corporation. Built notification systems for operational issues, designed scalable PySpark data pipelines on Azure Databricks, developed FastAPI REST APIs, and collaborated on system architecture.

Sep 2023 – Jun 2024

Developed AI-powered assistance systems that automated workflows and boosted operational efficiency. Created advanced analytics frameworks for real-time performance metrics. Engineered solutions for automated presentation generation and intelligent travel assistance.

Greece

Sep 2022 – Jun 2023

Mandatory Military Service. Honorable Distinction: Lance Corporal.

Thessaloniki, Greece

Jan 2021 – Sep 2022

Research on video summarization using Encoder-Decoder architectures with Attention Mechanisms and Transformers. Developed open-source PyTorch implementations. Explored GANs, LSTMs, and VAEs for generative summarization models.

Thessaloniki, Greece

Oct 2015 – Oct 2020

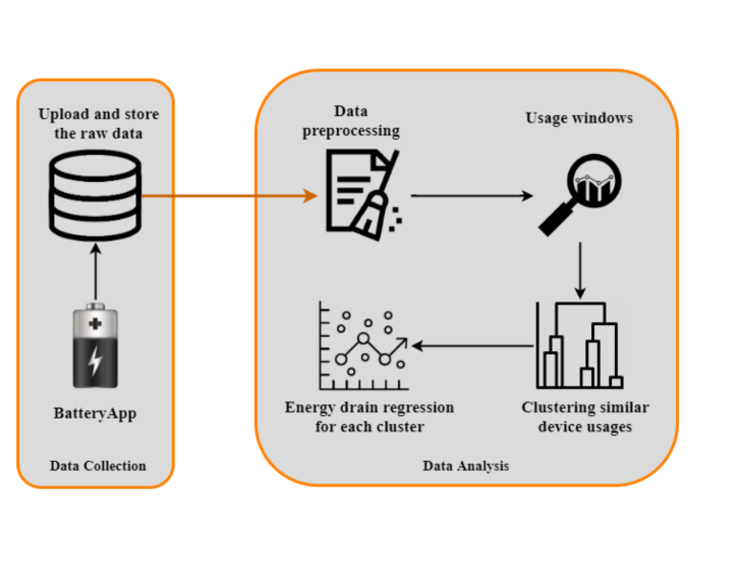

Specialized in Artificial Intelligence and Machine Learning, graduating in the top 6% of my class with a grade of 8.80/10. My academic interests spanned Software Engineering and Embedded Systems. My thesis focused on data collection and analysis of mobile phone energy consumption using Machine Learning techniques.

IEEE Int. Symposium on Multimedia (ISM) 2022 · Dec 2022

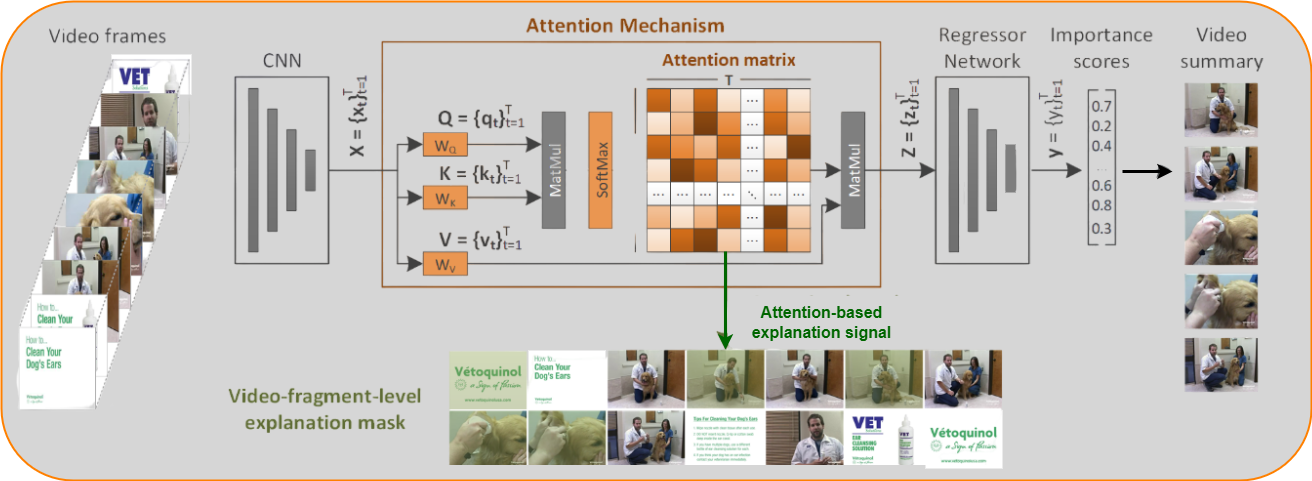

In this paper we propose a method for explaining video summarization. We start by formulating the problem as the creation of an explanation mask which indicates the parts of the video that influenced the most the estimates of a video summarization network, about the frames' importance. Then, we explain how the typical analysis pipeline of attention-based networks for video summarization can be used to define explanation signals, and we examine various attention-based signals that have been studied as explanations in the NLP domain. We evaluate the performance of these signals by investigating the video summarization network's input-output relationship according to different replacement functions, and utilizing measures that quantify the capability of explanations to spot the most and least influential parts of a video. We run experiments using an attention-based network (CA-SUM) and two datasets (SumMe and TVSum) for video summarization. Our evaluations indicate the advanced performance of explanations formed using the inherent attention weights, and demonstrate the ability of our method to explain the video summarization results using clues about the focus of the attention mechanism.
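The idea of probing a summarization network's input-output relationship with a replacement function can be illustrated roughly as follows. This is a minimal sketch under stated assumptions, not the paper's implementation: `toy_model`, `replace_fragment`, and `influence` are hypothetical names, the "network" is a stand-in function over scalar frame features, and the replacement function simply overwrites a fragment with a constant.

```python
def replace_fragment(features, start, end, replacement=0.0):
    """Hypothetical replacement function: overwrite frames [start, end) with a constant."""
    return [replacement if start <= i < end else f for i, f in enumerate(features)]


def toy_model(features):
    """Stand-in for a summarization network (assumption): each frame's score
    mixes its own feature with the mean over the whole sequence."""
    mean = sum(features) / len(features)
    return [0.5 * f + 0.5 * mean for f in features]


def influence(model, features, start, end):
    """Mean absolute change in the model's frame scores after replacing a fragment."""
    original = model(features)
    perturbed = model(replace_fragment(features, start, end))
    return sum(abs(o - p) for o, p in zip(original, perturbed)) / len(original)


features = [1.0, 1.0, 0.0, 0.0]
high = influence(toy_model, features, 0, 2)  # replacing the informative frames
low = influence(toy_model, features, 2, 4)   # replacing frames that are already zero
```

In this toy setting, a fragment whose replacement changes the output more is treated as more influential; an explanation signal could then be judged by how well it ranks such fragments.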

ACM Int. Conference on Multimedia Retrieval 2022 · Jun 2022

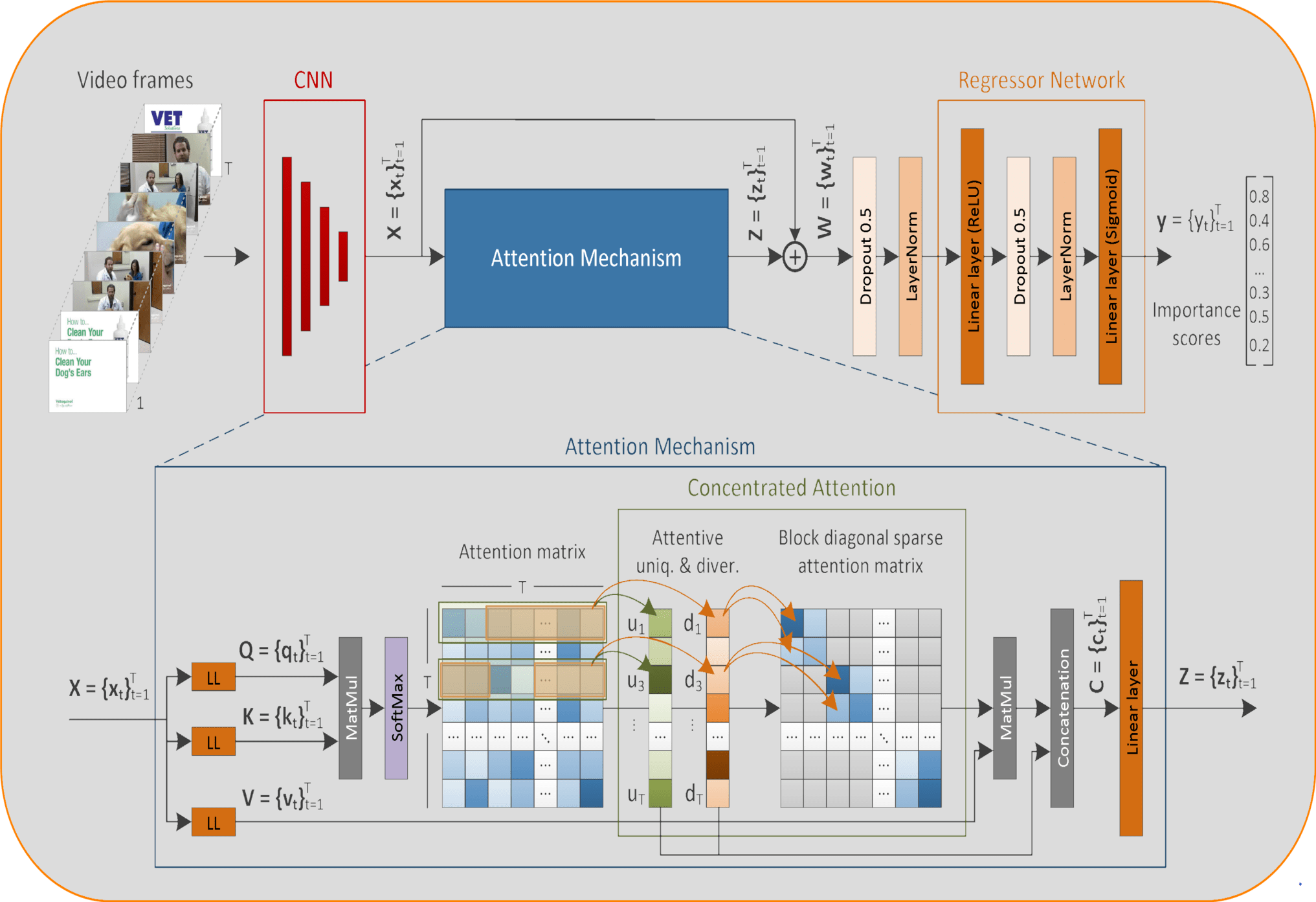

In this work, we describe a new method for unsupervised video summarization. To overcome limitations of existing unsupervised video summarization approaches, that relate to the unstable training of Generator-Discriminator architectures, the use of RNNs for modeling long-range frames' dependencies and the ability to parallelize the training process of RNN-based network architectures, the developed method relies solely on the use of a self-attention mechanism to estimate the importance of video frames. Instead of simply modeling the frames' dependencies based on global attention, our method integrates a concentrated attention mechanism that is able to focus on non-overlapping blocks in the main diagonal of the attention matrix, and to enrich the existing information by extracting and exploiting knowledge about the uniqueness and diversity of the associated frames of the video. In this way, our method makes better estimates about the significance of different parts of the video, and drastically reduces the number of learnable parameters. Experimental evaluations using two benchmarking datasets (SumMe and TVSum) show the competitiveness of the proposed method against other state-of-the-art unsupervised summarization approaches, and demonstrate its ability to produce video summaries that are very close to the human preferences. An ablation study that focuses on the introduced components, namely the use of concentrated attention in combination with attention-based estimates about the frames' uniqueness and diversity, shows their relative contributions to the overall summarization performance.
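Focusing attention on non-overlapping blocks along the main diagonal of the attention matrix can be pictured with a simple mask. The sketch below is illustrative only and is not the published implementation: `block_diagonal_mask` and `concentrate` are hypothetical helpers, the attention matrix is a plain list of lists, and the block size is an arbitrary choice.

```python
def block_diagonal_mask(num_frames, block_size):
    """Return a num_frames x num_frames 0/1 mask that keeps only
    non-overlapping blocks on the main diagonal."""
    return [
        [1 if i // block_size == j // block_size else 0 for j in range(num_frames)]
        for i in range(num_frames)
    ]


def concentrate(attention, block_size):
    """Zero out attention weights that fall outside the diagonal blocks."""
    mask = block_diagonal_mask(len(attention), block_size)
    return [
        [a * m for a, m in zip(row, mask_row)]
        for row, mask_row in zip(attention, mask)
    ]


# Uniform attention over 4 frames, concentrated into 2 blocks of 2 frames each.
uniform = [[0.25] * 4 for _ in range(4)]
concentrated = concentrate(uniform, block_size=2)
```

Each frame then attends only to frames in its own block, which is the "concentrated" view the abstract contrasts with purely global attention.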

IEEE Int. Symposium on Multimedia (ISM) 2021 · Dec 2021

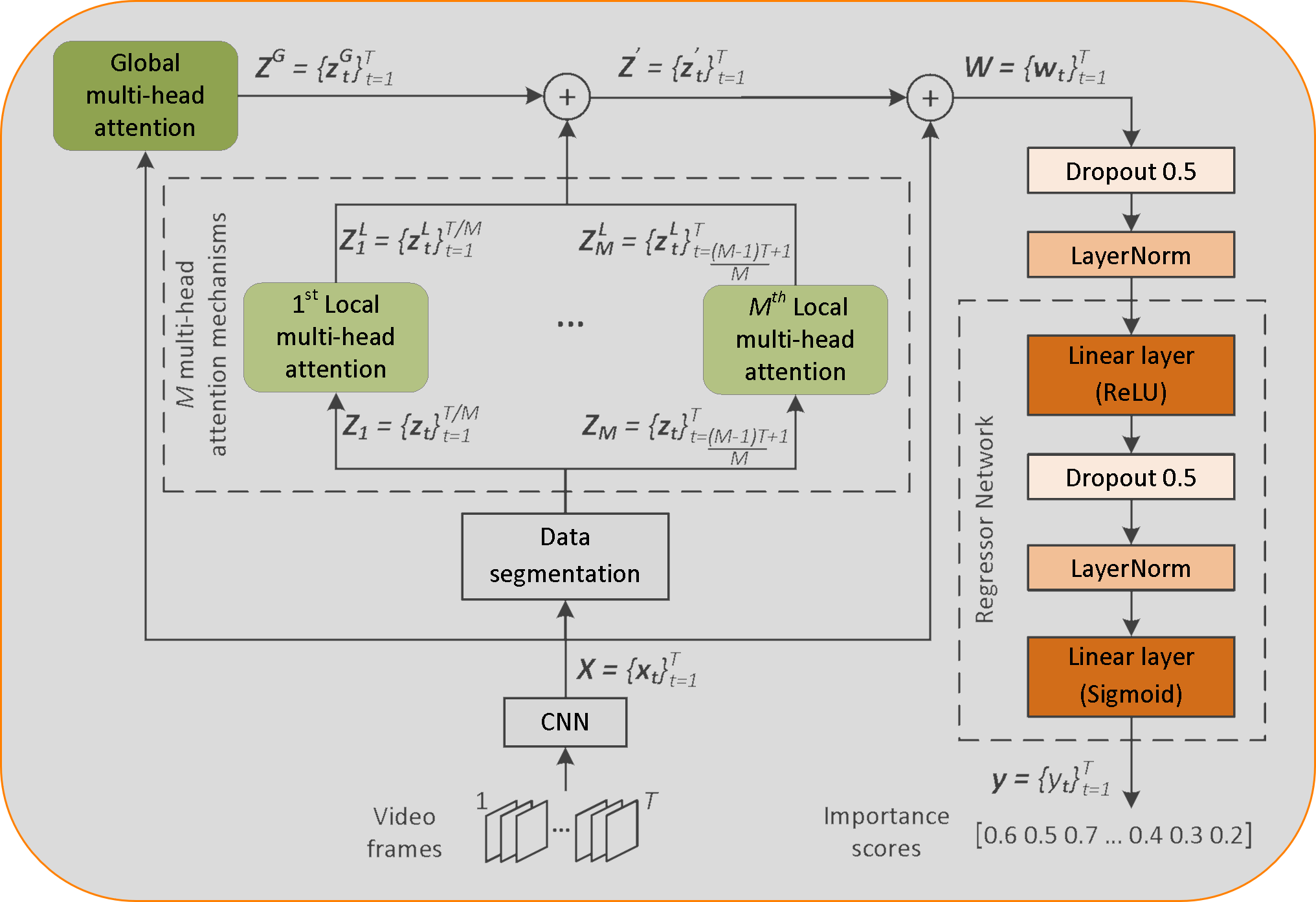

This paper presents a new method for supervised video summarization. To overcome drawbacks of existing RNN-based summarization architectures, that relate to the modeling of long-range frames' dependencies and the ability to parallelize the training process, the developed model relies on the use of self-attention mechanisms to estimate the importance of video frames. Contrary to previous attention-based summarization approaches that model the frames' dependencies by observing the entire frame sequence, our method combines global and local multi-head attention mechanisms to discover different modelings of the frames' dependencies at different levels of granularity. Moreover, the utilized attention mechanisms integrate a component that encodes the temporal position of video frames - this is of major importance when producing a video summary. Experiments on two datasets (SumMe and TVSum) demonstrate the effectiveness of the proposed model compared to existing attention-based methods, and its competitiveness against other state-of-the-art supervised summarization approaches. An ablation study that focuses on our main proposed components, namely the use of global and local multi-head attention mechanisms in collaboration with an absolute positional encoding component, shows their relative contributions to the overall summarization performance.

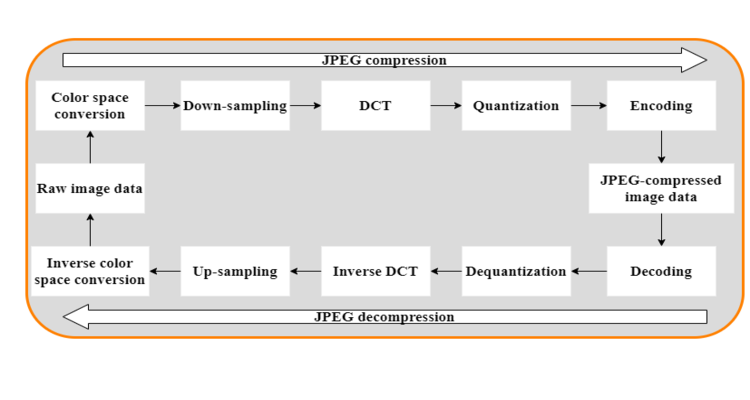

Implementation of the JPEG standard (ISO/IEC 10918-1:1994)....

My own Unix shell implementation in C....

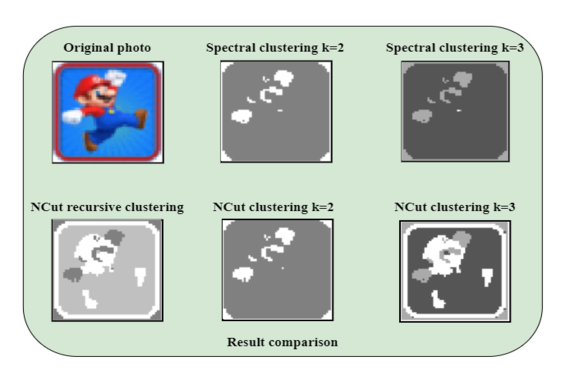

Image segmentation using graph-based clustering algorithms....

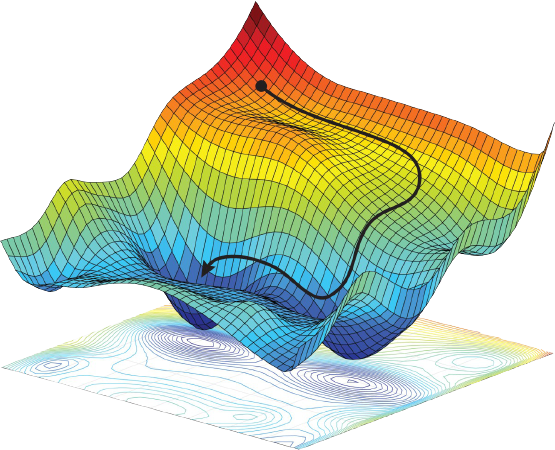

Implementation of various optimization algorithms for findin...

Raspberry Pi 0 communication over Wi-Fi with minimal energy ...

Data Collection and Analysis of Energy Consumption of Mobile...

Skillset

Frameworks and patterns for orchestration, tracing, evaluation, and tool-using workflows.

Core modeling and applied ML work, especially around deep learning, NLP, and production operations.

The platform layer for data pipelines, services, storage, and cloud-native delivery.

Tooling that keeps systems versioned, deployable, and visible once they are live.

Norris wins his first world title by two points, McLaren sec...

Verstappen secures a fourth title, McLaren win their first c...

Verstappen's third title, Red Bull win 21 of 22 races, McLar...

Red Bull dominance, Ferrari mistakes, Max secures his second...

Intense battles, Verstappen vs Hamilton, Max clinches his fi...

Challenging season, Covid‑19 limiting spectators, Hamilton's...

Feel free to reach out for collaborations, questions, or just to say hello! Whether you want to discuss Formula 1 races or grab a coffee ☕ — I'm always happy to connect! 🏎️